I am seeing a lot of fearmongering and misinformation regarding recent events (CSAM being posted in now closed large lemmy.world communities). I say this as someone who brought attention to this with other admins as I noticed things were federating out.

Yes, this is an issue, and what has happened in regards to CSAM is deeply troubling, but there are solutions and ideas being discussed and worked on as we speak. This is not just a lemmy issue but an overall internet issue that affects all forms of social media. There is no clear-cut solution, but most jurisdictions have some form of safe harbor policy for server operators operating in good faith.

A good analogy to think of here is if someone were to drop something illegal into your yard that is open to the public. If someone stumbled upon said items, you aren’t going to be hunted down for it unless there is evidence showing you knew about the items and left them there without reporting them, or were selling/trading said items. If someone comes up to you and says “hey, there’s this illegal thing on your property” and you report it, hand it over to the relevant authorities, and pass along footage from any security cameras you have, then you’d be fine.

A similar principle exists online, specifically on platforms such as this. Obviously the FBI is going to raid whoever they want and will find reasons to if they need to, but I can tell you with near certainty that they aren’t as concerned with a bunch of nerds hosting a (currently) niche software created by 2 communists as a pet project that gained popularity over the summer because an internet business decided to shoot itself in the foot. They are specifically out to find people who are selling, trading, and making CSAM. Those that knowingly and intentionally distribute and host such content are the ones that they are out for blood for.

I get it. This is anxiety-inducing, especially as an admin, but so long as you are preserving and reporting any content that is brought to your attention in a timely manner and are following development and active mitigation efforts, you should be fine. If you want to know in more detail click the link above.

I am not a lawyer, and of course things vary from country to country so it’s a good idea to check from reputable sources on this matter as well.

As well, this is a topic that is distressing for most normal, well-adjusted people for pretty obvious reasons. I get the anxiety over this, I really do. It’s been a rough few days for many of us. But playing into other people’s anxiety over this is not helping anyone. What is helping is following and contributing to the discussion of potential fixes/mitigation efforts and taking the time to calmly understand what you as an operator are responsible for within your jurisdiction.

Also, if you witnessed the content being discussed here, no one will fault you for taking a step away from lemmy. Don’t sacrifice your mental health over a volunteer project, it’s seriously not worth it. Even more so if this has made you question self hosting lemmy or any other platform like it. That is valid as well, and it should be made clearer that this is a risk you are taking on when making any kind of website that is connected to the open internet.

While there is merit to your post, I will point out the obvious: your post is hosted on an instance named “lemmy world” and you are citing US enforcement codes and sections, but the lemmy.world servers do not reside inside the United States. Lemmy.world (the server) would be subject to the laws of the country in which it is hosted, the admin team would be subject to the laws of the countries in which they reside, the community moderators would be subject to the laws of the countries in which they reside, and the lemmy.world users would be subject to the laws of wherever they reside.

So, you can’t simply link to a writeup about some US regulations and assume it’s going to be exactly the same everywhere and for everyone.

Absolutely but this is a good basis to work from.

No no, America is the world. You didn’t know?

I was selfhosting a lemmy instance just for myself, to be able to connect and post to other instances. I had no communities or users. I ended up shutting it down because at the end of the day, I just don’t want stuff like CSAM making its way onto my home server because someone with ill intent bombarded a server I’m federated with. I still believe in the fediverse and lemmy in general, and no, I don’t think I’m going to get raided and arrested. But the fear just outweighed the pleasure I got from my little selfhosting project. I’m happy that other instances are up and running, and that I can post from them. I’ll also be excited to redeploy a personal server in the future when I feel that development has progressed enough that the risk to myself is low enough to still find pleasure in the project.

And that is completely understandable. Everyone has their own risk tolerance, as another commenter said. The removal of caching remote images is coming soon, most likely days away from release into lemmy. And although it is not the only tool we need, it is a step in the right direction.

understandable, everyone has their own risk tolerance

4chan exists and continues to thrive. That is enough proof that many Lemmy mods are overreacting. Hiromoot is a one man show too.

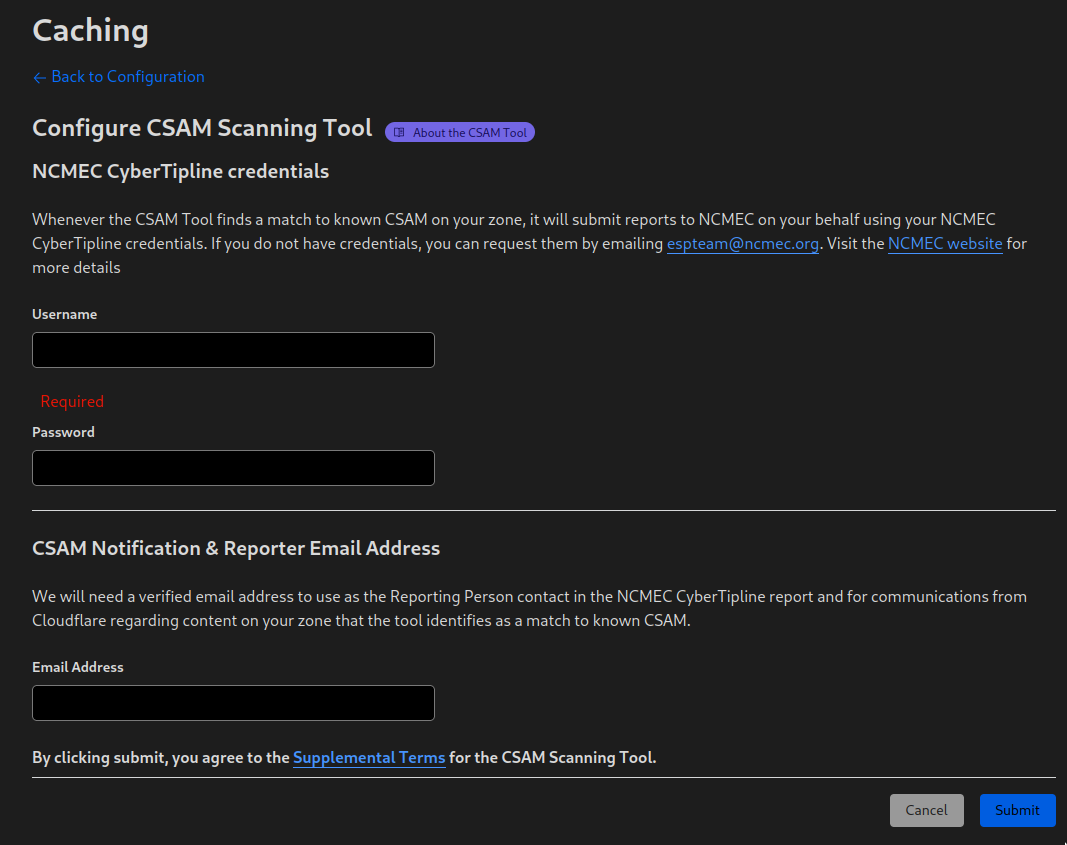

Does Cloudflare’s CSAM scanning tool help at all for instances using Cloudflare?

Yes. But only if you are in the US and get an API key from NCMEC. They are very protective of who gets the keys and require a Zoom call as well. There is hope Cloudflare will integrate with other countries’ databases as well, but we will see. There is also active discussion of deals to provide hash scanning software to fediverse instances more easily.

… only if you are in the US and get an API key from NCMEC. They are very protective of who gets the keys and require a zoom call as well.

Do you have a source for these statements, because they directly contradict the Cloudflare product announcement at https://blog.cloudflare.com/the-csam-scanning-tool/ which states:

Beginning today, every Cloudflare customer can login to their dashboard and enable access to the CSAM Scanning Tool.

… and shows a screenshot of a config screen with no field for an API key. Some CSAM scanners do have fairly limited access, but Cloudflare’s appears to be broadly available.

I’m not from the US. I tried requesting NCMEC credentials and this is what they told me:

if your company or site is located internationally, we are unable to register you at this time

The CSAM scanning tool didn’t require NCMEC credentials back then. At least that’s the context I’m getting from this short thread in 2021.

I also tried looking into other tools like PhotoDNA but it also isn’t available in my country.

edit: just to add, the blog post you linked is old (2019). They changed the UI. If you log into the Cloudflare dashboard, it looks like this:

And yes, they do require a 30 minute Zoom call according to the email exchange I had with the NCMEC.

Why are they protective of the keys? Why not just make it available so csam removal can be easily automated?

Can CSAM distributors use it as a test suite for workarounds?

Edit: first draft was too declarative where I meant to pose the thought as a question.

Yes. They can stress test it if it is not under tight lock and key. That is why these databases are so heavily guarded and every new user is heavily vetted.

There should be a full write up from a lawyer - or, better yet, an organization like the EFF. Because lemmy.world is such a prominent instance, it would probably garner some attention if the people who run it were to approach them.

People would still have to decide what their own risk tolerances are. Some might think that even if safe harbor applies, getting swatted or doxxed just isn’t worth the risk.

Others might look at it, weigh their rights under the current laws, and decide it’s important to be part of the project. A solid communication on the specific application of S230 to a host of a federated service would go a long way.

I worked as a sys admin for a while in college in the mid-90s, and it was a time when ISPs were trying to get considered common carriers. Common carrier covers phone companies from liability if people use their service to commit crimes. The key provision of common carrier status was that the company exercised no control whatsoever over what went across their wires.

In order to make the same argument, the systems I helped manage had a policy of no policing. You could remove a newsgroup from usenet, but you couldn’t do any other kind of content-oriented filtering. The argument went that as soon as you start moderating, you’re now responsible for moderating it all. True or not, that’s the argument made and policy adopted on multiple university networks and private ISPs. And to be clear, we’re not talking about a company like facebook or reddit which have full control over their content. We’re talking things like the web in general, such as it was, and usenet.

Usenet is probably the best example, and I knew some BBS operators who hosted usenet content. The only BBS owners that got arrested (as far as I know) were arrested for being the primary host of illegal material.

S230 or otherwise, someone should try to get a pro bono opinion from a lawyer (or lawyers) who knows the subject.

Edit: Looks like EFF already did a write up. With the number of concerned people posting on this topic, this link should be in every official reply and as a post in the topic.

Your link markdown is in reverse.

Yeah, my client crashed when I was trying to edit it. Thanks for the reminder!

For anyone who’s uncomfortable about the possibility of serving CSAM from their instance, just block pictrs from serving any image by adding this to the lemmy nginx config, at least until this pull request is merged and included in a future lemmy version.

location ^~ /pictrs/ { return 404; }

503 would probably be more accurate since it’s a server side error saying it’s not available compared to 404 not found.

451 or 403 would be more appropriate as it’s not available for legal reasons. 410 Gone would also fit well if it’s a permanent block. I’d steer clear of 5xx server side because it encourages retry-later. The client has requested something not served, firmly placing it into the 4xx category. The other problem with 503 in particular is that it indicates server overload, falsely in the case of a path ban.
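For anyone who wants to try the status code suggested here, a minimal sketch of the same block with 451 swapped in for 404 is below. It assumes the block sits inside the `server { }` section that fronts Lemmy, as in the snippet quoted earlier in the thread; the comments are mine and the choice of 451 over 403 or 410 is a judgment call, not anything the Lemmy docs prescribe.

```nginx
# Sketch only: place inside the server { } block that proxies Lemmy.
# "^~" makes this prefix match win over any regex locations,
# so every request under /pictrs/ is answered here and never proxied.
location ^~ /pictrs/ {
    # 451 = Unavailable For Legal Reasons.
    # Swap in 403 or 410 if one of those suits your situation better.
    return 451;
}
```

After editing, `nginx -t` checks the syntax and `nginx -s reload` applies it. Remove the block again once a Lemmy release lets you disable remote image caching.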

I mean, it depends on whether they want the block to be permanent or not, but the comment I replied to said “at least until” the issue / PR is merged, and I assume that change will be prioritized by the community and out before we know it.

I do hope so. Temporary things have a stickiness that makes them semi-permanent. May as well go with 418 then :o)

I think it’s also a good prompt, as a self hoster, to assess what services you are hosting and what kind of risk profile that exposes you to. Making yourself aware of any regulations or legal implications and their potential consequences (if any) may mean that self hosting a service becomes much less fun/cool and not worth it.

To expand the conversation; NOTE: I am NOT a Lawyer

People hosting a federated instance in Australia would likely be classed as a Social Media service and be bound by the relevant safety code on the eSafety commissioner’s site here: https://www.esafety.gov.au/industry/codes/register-online-industry-codes-standards. This is planned to take effect in December 2023 but serves as a guide.

First perform an assessment on your risk factor to determine a Tier (1, 2, 3), which dictates your required actions. Services that assess between tiers should assume higher risk, which means, potentially, you may be classed higher risk due to the general nature of the content (it’s not a club where conversation is around a specific topic).

Minimum compliance (assuming you are classed as a Tier 3 Social Media Service)

Section 7, Objective 1, Outcome 1.1 and Outcome 1.5:

Should you be determined to be Tier 2 or 1, there are a whole raft of additional actions including ensuring you are staffed to oversee the safety (1.4), and child account protections (1.7) (preventing unwanted contact), and active detection of CSAM material (1.8)

1.1

Notifying appropriate entities about class 1A material on their services

If a provider of a social media service:

a) identifies CSEM and/or pro-terror materials on its service; and

b) forms a good faith belief that the CSEM or pro-terror material is evidence of serious and immediate threat to the life or physical health or safety of an adult or child in Australia,

it must report such material to an appropriate entity within 24 hours or as soon as reasonably practicable.

An appropriate entity means foreign or local law enforcement (including, Australian federal or state police) or organisations acting in the public interest against child sexual abuse, such as the National Centre for Missing and Exploited Children (who may then facilitate reporting to law enforcement).

Note: Measure 1 is intended to supplement any existing laws requiring social media service providers to report CSEM and pro-terror materials under foreign laws, e.g., to report materials to the National Centre for Missing and Exploited Children and/or under State and Territory laws that require reporting of child sexual abuse to law enforcement.

Guidance:

A provider should seek to make a report to an appropriate entity as soon as reasonably practicable in light of the circumstances surrounding that report, noting that the referral of materials under this measure to appropriate authorities is time critical. For example, in some circumstances, a provider acting in good faith, may need time to investigate the authenticity of a report, but when a report has been authenticated, an appropriate authority should be informed without delay. A provider should ensure that such report is compliant with other applicable laws such as Privacy Law.

1.5

Safety by design assessments

If a provider of a social media service:

a) has previously done a risk assessment under this Code and implements a significant new feature that may result in the service falling within a higher risk Tier; or

b) has not previously done a risk assessment under this Code (due to falling into a category of service that does not require a risk assessment) and subsequently implements a significant new feature that would take it outside that category and require the provider to undertake a risk assessment under this Code,

then that provider must (re)assess its risk profile in accordance with clause 4.4 of this Code and take reasonable steps to mitigate any additional risks to Australian end-users concerning material covered by this Code that result from the new feature, subject to the limitations in section 6.1 of the Head Terms.

What does csam mean?

Child Sexual Abuse Material

Also, are the images even federated? I know the current line of thinking is that they are, but I could not find them in my local pictrs volume. Not that I wanted to, mind you. But I looked and only saw one picture in there from the problematic time period, and it happened to be one of my user’s avatars. And one of the CSAM posts federated with me, I know for a fact, because I saw the comments even though I couldn’t see the picture (and I feel horrible for those users who saw it, some of them were obviously traumatized).

I’m keeping a close eye on my pictrs volume and really scrutinizing who I allow on my instance after this whole thing, but on the whole, I’m not overly concerned, even as a US-based self-hoster. I registered with the DMCA and will fully comply with any and all takedown requests, even silly ones like copyright. I don’t have the finances or time for prolonged legal battles.

Edit: Figured it out. My pictrs container didn’t have an external network definition, so it was timing out while retrieving external images.

Right now all images are cached by default, even external ones. A copy is downloaded into pictrs. A fix to disable that is being worked on and should land very soon. It was already in progress before this because of ballooning long-term storage costs.

Huh, do I have that misconfigured by some happy accident? My pictrs volume is only around 50Mb after running my instance for over a month. I have both LCS and Lemmony federating popular content, too…

deleted by creator

Yes, I’ve got community icons and avatars working. Which are actually the only things I see in my pictrs volume.

I have to regularly clear the cache on my devices for Memmy as it will continue growing (it was beyond 4GB one time when I noticed it eating my phone’s space). I’m assuming it’s saving images on device too.

It likely is, horribly optimized mess of a software right now

They are. We’ve manually removed these images from our instance (and are following the guidelines provided by local laws).

Acronyms, initialisms, abbreviations, contractions, and other phrases which expand to something larger, that I’ve seen in this thread:

| Fewer Letters | More Letters |
|---|---|
| CF | CloudFlare |
| HTTP | Hypertext Transfer Protocol, the Web |
| IP | Internet Protocol |
| nginx | Popular HTTP server |

3 acronyms in this thread; the most compressed thread commented on today has 8 acronyms.

[Thread #97 for this sub, first seen 31st Aug 2023, 13:15]

Is there a reason why your bot doesn’t define CSAM?

Because it is US-specific, while CP is used world-wide

That doesn’t make any sense… the fact that it’s only used in part of the world makes it even more useful for the bot to define it.

CP is something that’s prevented me from hosting imaging solutions in the past, out of risk-avoidance, so I’ve given it a lot of thought over the years. The lack of support from Cloudflare hasn’t helped, and making it USA-only weakens it as a general solution. That said, I’ll still run some sites via Cloudflare because I’m certain it tracks the content regardless without the mandate to enforce or alert, and that tracking may help lead to the original source [pure opinion here with no hard facts, but I use CF for other reasons].

Now that I want to host fediverse things safely, it’s still a concern. I’m not in the US, I’m in the UK and host in Canada. Doesn’t matter greatly. They’d still take all my equipment while they investigate IF they had sufficient evidence to charge. But they WON’T because the CP is attributable to someone else. The main takeaway from all of this, for me, is to NEVER take backups of actual content, only settings/accounts. Holding archives is dangerous because only I would have access to their contents.

Defederate aggressively, block paths as needed, keep logs, don’t run it from home, etc etc. Keeping records gets most folk out of sticky legal situations.

The law also expands criminal and civil liability to classify any online speaker or platform that allegedly assists, supports, or facilitates sex trafficking as though they themselves were participating “in a venture” with individuals directly engaged in sex trafficking.

Yeah this part worries me. Those who self host and end up with CSAM unknowingly from federated instances could be charged as if they were the malicious actors themselves.

I can’t speak for everyone else, but I know I was taken aback by the prospect that selfhosting meant I’d be caching copies of media uploaded to other instances without any way to opt out of that. It’s one thing to provide links, it’s another thing to be functioning as a knockoff CDN. That’s a bit more than I’m willing to do for the sake of a vanity instance.

You can’t turn pictrs off as a configuration setting?

If you use nginx it seems this does the trick by no longer serving images.

location ^~ /pictrs/ { return 404; }

Will that screw up displaying things that would be cached from other instances?

Seems it will serve a 404 instead of any image.

Mmmmm that’s unlikely. We would see lots of social media websites go down if that was the case.

The root issue here, when your local police department knocks down your door with guns drawn in the US, after you were anonymously reported to the feds-

They aren’t asking questions. If your children don’t get a flashbang to the face during the surprise entry into your home, and your dog doesn’t get shot, you are doing good.

Here in the US, you go to jail first. You get somebody putting fingers up your ass looking for drugs first. You have to post your own bail.

THEN, when you finally get a court date months later, THEN, you can make your case as to why there was CSAM content hosted at your IP.

It is NOT WORTH THE RISK!

Chill with the FUD. Speaking as a web host, there have been far more cases of random web hosts’ doors being busted down by the feds for hosting copyrighted material than for CSAM they had no reasonable knowledge of.

I don’t think you are helping the case here!

You are just adding another reason as to why I shouldn’t be hosting lemmy from my personal infrastructure.

This is pure raw copium. Dealing with CSAM is hard, draining, and expensive. Good luck trying to enjoy your vacation while complying with an FBI raid in a timely manner.