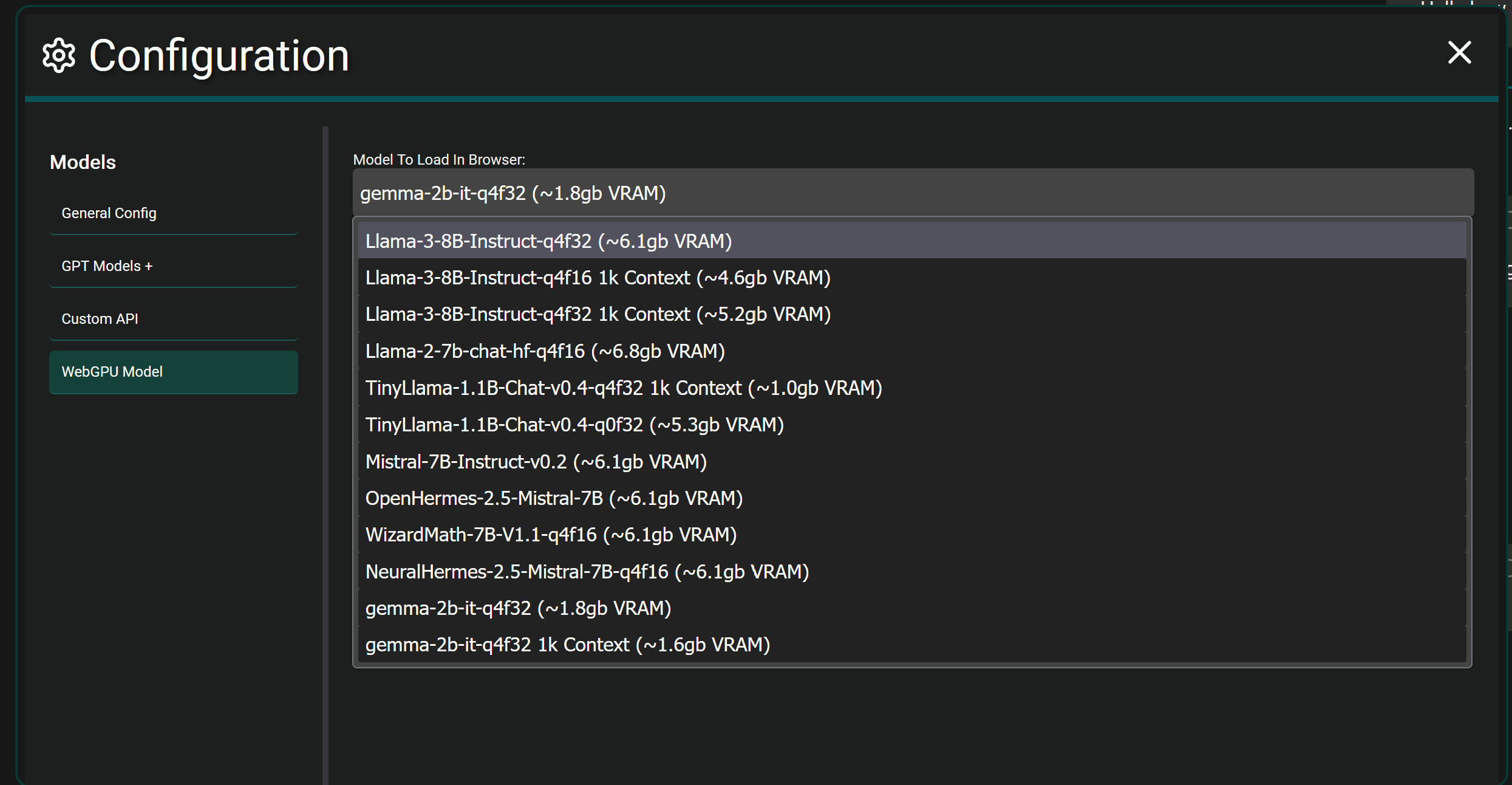

I haven’t personally tried it with Ollama yet, but it should work, since it looks like Ollama exposes an OpenAI-compatible API: https://github.com/ollama/ollama/blob/main/docs/openai.md
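For anyone curious, here is a rough, untested sketch of what a chat request against Ollama's OpenAI-compatible endpoint might look like from the browser. It assumes Ollama is running locally on its default port (11434) and that a model has already been pulled; the model name `llama3` and the helper names `buildChatRequest` and `chat` are made up for illustration and are not part of this project.

```typescript
// Assumption: Ollama is running locally on its default port.
const OLLAMA_BASE_URL = "http://localhost:11434/v1";

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface ChatRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

// Hypothetical helper: builds an OpenAI-style chat completion request.
// Kept separate from the network call so it can be inspected on its own.
function buildChatRequest(
  baseUrl: string,
  model: string,
  messages: ChatMessage[]
): ChatRequest {
  return {
    // Strip trailing slashes so the path is not doubled up.
    url: `${baseUrl.replace(/\/+$/, "")}/chat/completions`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ model, messages, stream: false }),
    },
  };
}

// Hypothetical helper: sends the request and returns the assistant's reply text.
async function chat(
  baseUrl: string,
  model: string,
  messages: ChatMessage[]
): Promise<string> {
  const { url, init } = buildChatRequest(baseUrl, model, messages);
  const res = await fetch(url, init);
  if (!res.ok) {
    throw new Error(`Request failed with status ${res.status}`);
  }
  const data = await res.json();
  // OpenAI-style responses put the reply under choices[0].message.content.
  return data.choices[0].message.content;
}
```

Usage would be something like `await chat(OLLAMA_BASE_URL, "llama3", [{ role: "user", content: "Hello!" }])`, swapping in whichever model is actually installed.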

I might give it a go here in a bit to test and confirm.

This project is entirely web-based and built with Vue 3. It doesn’t use LangChain, and honestly I haven’t looked into it before, but I do see they offer a JS library I could utilize. I’ll definitely be looking into that!

As a result, there is currently no LLM function calling, and from what I remember, apps like LM Studio don’t support function calling when hosting models locally. It’s definitely on my list to add the ability to retrieve outside data, such as searching the web and generating a response from the results.