Smart to have a buyback clause in the contract, otherwise this would’ve been lost and locked until the patent expired.

Programmer and sysadmin (DevOps?), wannabe polymath in tech, science and the mind. Neurodivergent, disabled, burned out, and close to throwing in the towel, but still liking ponies 🦄 and sometimes willing to discuss stuff.

You say I don’t read… then proceed to explain the same thing I already said? Ok.

This is going to get interesting:

The decision imposes a daily fine of R$50,000 (£6,800) on individuals and companies that attempt to continue using X via VPN.

A judge’s ruling on a previous case makes that ruling law.

Previous rulings are a precedent in Common Law systems like the US, UK, Canada, or Australia.

Only Supreme Court rulings become a precedent in Civil Law systems like the EU, Russia, and most of the rest of the Americas.

To draw an example, the EU never made a law about cookie splash screens.

A very poor example: the Privacy and Electronic Communications Directive 2002/58/EC.

The EU at its top level creates “Directives”, which member states are then bound to transpose into their national Civil Law systems. Judges can interpret that law in different ways, none of which creates a precedent. Only a country’s Supreme Court decisions create a precedent for that country, and even then a case can be taken up to the EU Court of Justice, which has the final say.

Where I am, the news said:

https://en.m.wikipedia.org/wiki/Four_Horsemen_of_the_Infocalypse

Terrorists, pedophiles/child molesters, organized crime like drug dealers, intellectual property pirates, and money launderers are cited commonly

Do we have a BINGO?

It may not be just the Kremlin. I’ve had several cases where I wrote about something in a Telegram chat, stuff I had never talked about before, and in a matter of seconds started seeing related ads on Facebook.

Alternatively, it could be the keyboard leaking all text, or I could have some other spyware, but I’ve only had that happen to me between Telegram and Facebook.

Then again, Telegram group chats are not end-to-end encrypted, and personal chats aren’t either by default (only the opt-in “secret chats” are).

On the bright side, he also promised to build Saudi Arabia a Hyperloop, this one for The Line city in Neom, which is turning out to be a great way to siphon money from SA’s oil-sales state fund.

But seriously, a Hyperloop would work best on Mars, where the pressure differential would be minimal, while a tube would keep the dust out. Elon’s master plan is still to build a Mars colony with indentured servants under threat of no air. Along the way, he’s scamming whoever it takes, and grabbing any investment or benefit he can land.
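A quick back-of-the-envelope calculation makes the pressure argument concrete. The figures are assumptions: standard sea-level pressure on Earth, an approximate mean Mars surface pressure of about 610 Pa (it varies with season and altitude), and an illustrative tube pressure of 100 Pa.

```python
# Rough comparison of the pressure a low-pressure tube must hold back on Earth vs Mars.
EARTH_SEA_LEVEL_PA = 101_325  # standard atmosphere at sea level
MARS_SURFACE_PA = 610         # approximate mean; real value varies with season and altitude
TUBE_PA = 100                 # assumed tube pressure, illustrative value only


def pressure_differential(outside_pa: float, inside_pa: float = TUBE_PA) -> float:
    """Net pressure (Pa, i.e. newtons per square metre) pushing inward on the tube wall."""
    return outside_pa - inside_pa


earth = pressure_differential(EARTH_SEA_LEVEL_PA)
mars = pressure_differential(MARS_SURFACE_PA)
print(f"Earth: {earth:,.0f} Pa, Mars: {mars:,.0f} Pa, ratio ~{earth / mars:.0f}x")
```

Under these assumptions the tube walls on Earth hold back roughly two hundred times more pressure than on Mars, which is what drives the cost of the tube structure and seals.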

narcissistic arsehole

I recently got suckered into a group that turned out to consider calling people “narcissistic” an “ableist slur… because narcissism is a disability”.

EM is still kind of a real-life Tony Stark; the character is not exactly an altruistic philanthropist either.

Well, there is an open-source client, and private servers with custom rules. That makes every modification possible.

US company enacting puritanical culture erasure guidelines? What’s new?

If they want their culture preserved, there are PeerTube and archive.org, but they may have trouble monetizing them.

Crazy talk, and you’re onto something… that’s been solved already.

First part: you hate that a game that’s over 10 years old is only getting cosmetic changes instead of an overhaul of the whole character model. That’s crazy; nobody’s going to do that, not those expecting a profit, and not the modding community doing it for free. If you feel it’s a silly change, you’re right, but realize that it’s the only change they could make.

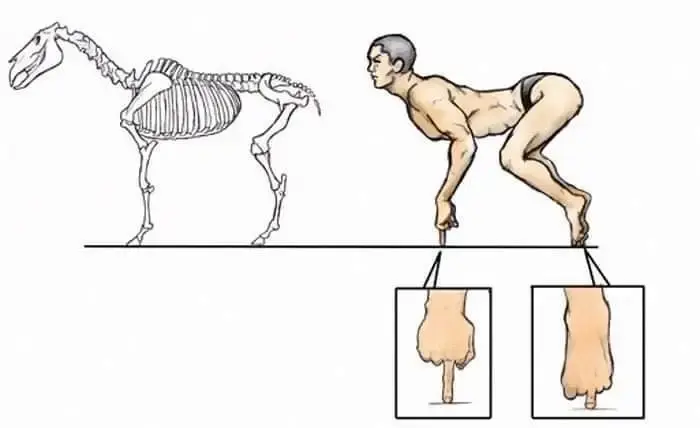

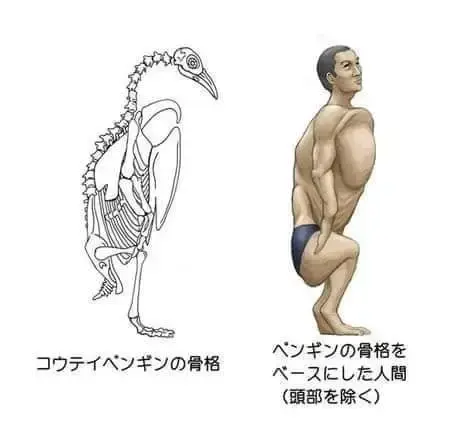

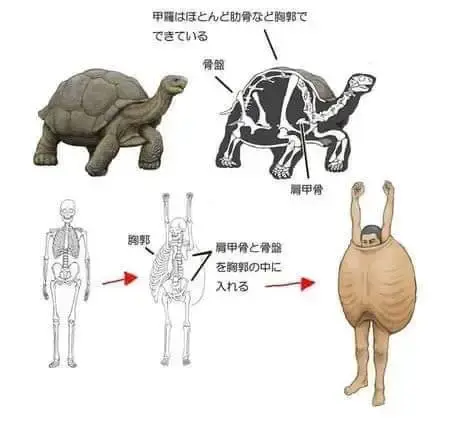

You’re onto something: body feature sliders. Male, female, giraffe, and turtle bodies have some structural differences, but those mostly map to the same bones with different shapes. The solution is a body shape slider, or 50. Some games have had that since at least the 2000s; others were lazy and didn’t do it.
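Body shape sliders are commonly implemented with blend shapes (also called morph targets): the mesh keeps the same skeleton, and each slider adds a weighted offset from the base vertex positions toward a sculpted target shape. A minimal sketch of that idea, with hypothetical slider names and toy two-vertex data; real engines often also scale bones and recompute normals, which this leaves out:

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def apply_sliders(
    base: List[Vec3],
    targets: Dict[str, List[Vec3]],
    weights: Dict[str, float],
) -> List[Vec3]:
    """Blend-shape deformation: vertex = base + sum over sliders of weight * (target - base)."""
    result: List[Vec3] = []
    for i, (bx, by, bz) in enumerate(base):
        x, y, z = bx, by, bz
        for name, w in weights.items():
            tx, ty, tz = targets[name][i]  # same vertex in the sculpted target shape
            x += w * (tx - bx)
            y += w * (ty - by)
            z += w * (tz - bz)
        result.append((x, y, z))
    return result


# Hypothetical sliders on a toy two-vertex "body".
base = [(0.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
targets = {
    "neck_length": [(0.0, 0.0, 0.0), (0.0, 3.0, 0.0)],     # stretches the top vertex upward
    "shoulder_width": [(0.5, 0.0, 0.0), (0.0, 1.0, 0.0)],  # pushes the bottom vertex outward
}
morphed = apply_sliders(base, targets, {"neck_length": 0.5, "shoulder_width": 1.0})
print(morphed)
```

Because every slider is just a weighted offset, adding a 50th slider is mostly an art-pipeline cost (sculpting the target), not a change to the skeleton or animation system.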

As a reference for how far this could go, the following all have the same bones, differing only in shape, size, and muscle placement:

Games could have multiple protagonists with different bodies, genders, personalities, etc. Overwatch did something like that; you could even play as a hamster or a robot!

Fruit of the poisoned tree. Disney’s “until author’s death + 70 years” copyrights are BS; it would be nice if nVidia and all the AI companies argued to change that.
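The “death + 70 years” rule is simple date arithmetic. A minimal sketch, assuming the term runs to the end of the calendar year (as it does in the US and EU for works by a known individual author); works made for hire, anonymous works, and older US works follow different rules and are not handled by this hypothetical helper:

```python
from datetime import date


def public_domain_date(author_death_year: int, term_years: int = 70) -> date:
    """First day a work is free under a 'life + N years' rule.

    Assumes protection lasts until the end of the calendar year in which the
    term expires, so the work becomes free on 1 January of the following year.
    """
    return date(author_death_year + term_years + 1, 1, 1)


# An author who died in 1950 would see their works become free on 1 January 2021.
print(public_domain_date(1950))
```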

Maybe… but before sharing files, his priors include selling stolen phone numbers, some shady stock manipulation, an embezzlement sentence, running an illegal hedge fund, and other shenanigans.

Disney ~~stole~~ adapted most of those beloved stories and characters.

As well as copyright infringement, Dotcom faces more serious charges, including money laundering and racketeering. He has long argued that he should not be held liable for copyright infringement carried out using his site

Ok… so what about that money laundering and racketeering, once again?

Really not sure how to feel about this. The copyright infringement damage claims are bonkers, but this guy is not exactly an upstanding citizen either. He already got some jail time in the 90s, fled to NZ, changed his name to a joke, and has been involved in random shady stuff over the last decade.

Good thing the Fediverse is a thing… too bad it isn’t more popular and doesn’t have more tooling, but good thing it exists.

consumer cameras were made with a certain type of complexion in mind

Not sure if it’s what you’re talking about, but consumer cameras, and most graphics systems, have been using nonlinear (gamma) encoding to fit a larger dynamic range into a reduced data range… which has the effect of reducing the detail level in brighter areas of an image, on the idea that the human eye would struggle to see it anyway. It didn’t have anything to do with complexion, but with pushing technology to a minimally acceptable level on a limited budget. HDR cameras with linear encoding are still quite expensive, way out of the consumer market range, and it doesn’t seem like that’s going to change any time soon.
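To see how gamma encoding trades precision between dark and bright tones, the sketch below counts how many 8-bit code values land in the darkest and brightest 10% of linear light. It assumes a pure power-law curve with gamma 2.2, a simplification of real transfer functions such as sRGB, which add a short linear segment near black:

```python
def encode(linear: float, gamma: float) -> int:
    """Map linear light in [0, 1] to an 8-bit code: 255 * linear ** (1 / gamma), rounded."""
    return round(255 * linear ** (1.0 / gamma))


def codes_in_range(lo: float, hi: float, gamma: float, samples: int = 100_000) -> int:
    """Number of distinct 8-bit codes used for linear values between lo and hi."""
    step = (hi - lo) / samples
    return len({encode(lo + i * step, gamma) for i in range(samples + 1)})


for gamma in (1.0, 2.2):
    shadows = codes_in_range(0.0, 0.1, gamma)     # darkest 10% of linear light
    highlights = codes_in_range(0.9, 1.0, gamma)  # brightest 10% of linear light
    print(f"gamma {gamma}: shadows={shadows} codes, highlights={highlights} codes")
```

With gamma 2.2 the shadows get several times more code values than with linear encoding, while the highlights get fewer.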

Lightning Network is not centralized; anyone can run a node with their own wallet. Not everyone will want to, since there are management and safety tasks involved, but that’s up to each person.

Funds are stored in your own wallet… but again, you can use some bank’s wallet if you want to; it’s up to each person.

Transactions are almost instantaneous; there’s no need to wait for the channels to settle. You only need to wait when moving Bitcoin between non-LN and LN wallets or, if running your own node, when a channel closes.
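A toy model can illustrate why in-channel payments are fast: only opening and closing a channel touch the blockchain, while each payment just re-splits funds already locked in the channel. This is a deliberately simplified sketch with a hypothetical `ToyChannel` class; real Lightning channels exchange signed commitment transactions, route through multiple hops with HTLCs, and handle fees and penalties, none of which is modelled here.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyChannel:
    """Two-party payment channel toy: no signatures, HTLCs, routing, fees, or penalties."""

    balance_a: int  # satoshis locked on-chain for party A when the channel opens
    balance_b: int  # satoshis locked on-chain for party B
    onchain_txs: List[str] = field(default_factory=list)
    is_open: bool = True

    def __post_init__(self) -> None:
        # Opening needs one on-chain funding transaction: this is the slow, confirmed step.
        self.onchain_txs.append("funding")

    def pay(self, amount: int, a_to_b: bool = True) -> None:
        """Off-chain update: re-split the locked funds; nothing is written to the chain."""
        if not self.is_open:
            raise ValueError("channel is closed")
        sender_balance = self.balance_a if a_to_b else self.balance_b
        if not 0 < amount <= sender_balance:
            raise ValueError("invalid amount")
        delta = amount if a_to_b else -amount
        self.balance_a -= delta
        self.balance_b += delta

    def close(self) -> None:
        # Closing publishes the final balances in one on-chain settlement transaction.
        self.is_open = False
        self.onchain_txs.append("settlement")


ch = ToyChannel(balance_a=100_000, balance_b=0)
ch.pay(30_000)                # A pays B instantly, off-chain
ch.pay(5_000, a_to_b=False)   # B pays A back, still off-chain
ch.close()                    # only now does the chain see the final split
print(ch.balance_a, ch.balance_b, ch.onchain_txs)
```

However many payments happen in between, the chain only sees two transactions, which is why waiting is only needed when funds move on or off Lightning.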

You can find a list of physical stores accepting LN… mostly in El Salvador, but still.

By the time they’re about to go belly up, companies no longer have the resources to ensure they comb through the code to remove the parts licensed from 3rd parties, and the liquidators see all assets as something to sell in order to cover whatever loans the company got.

In an ideal world, consumers would never buy a non-open sourced car, or phone, or IoT device.

In the real world, regulators need to force companies to give consumers at least some basic way to control the products they buy.